Language models, a subset of artificial intelligence, focus on interpreting and generating human-like text. These models are integral to various …

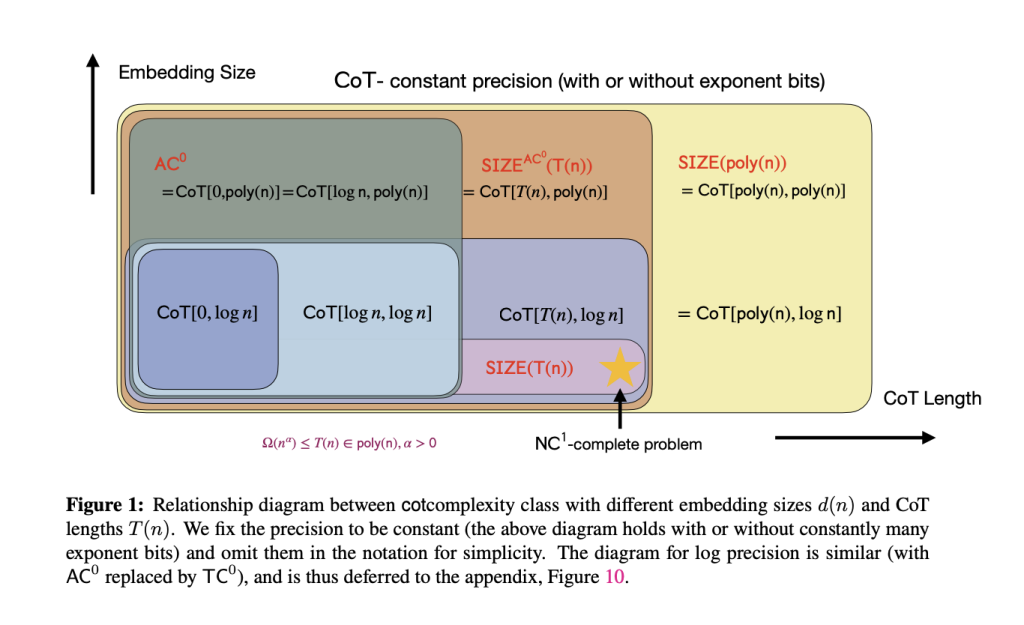

Large Language Models (LLMs) like GPT-3 and ChatGPT exhibit exceptional capabilities in complex reasoning tasks such as mathematical problem-solving and …

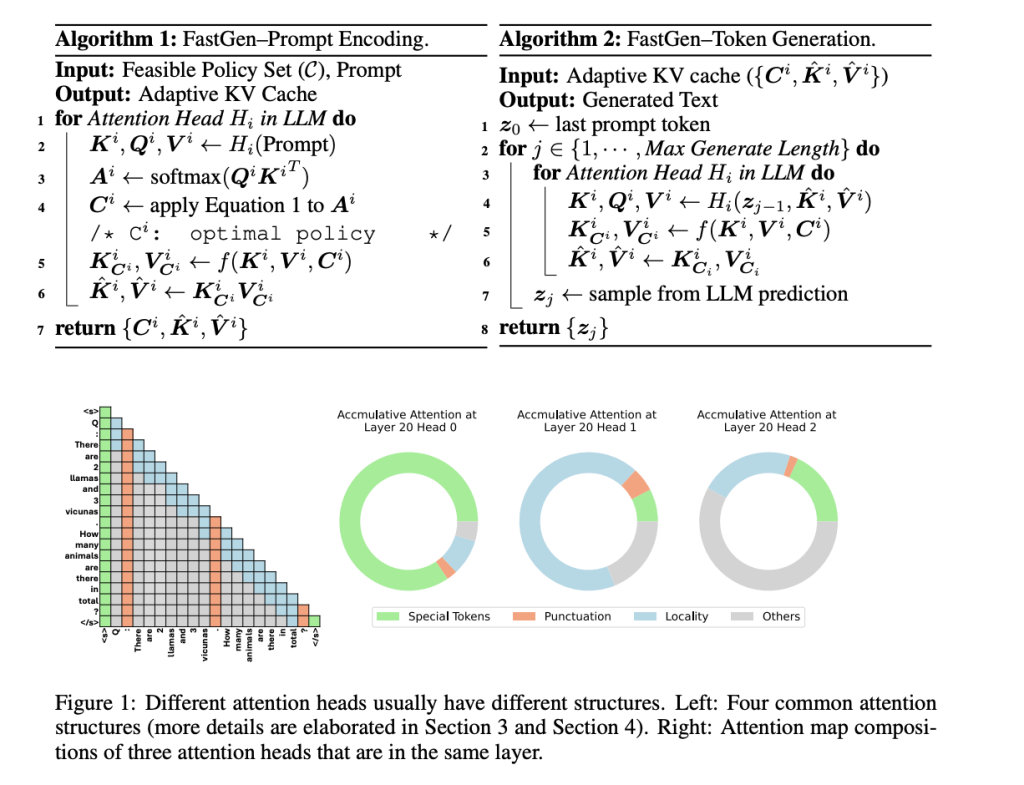

Autoregressive language models (ALMs) have proven their capability in machine translation, text generation, etc. However, these models pose challenges, including …

Join us in returning to NYC on June 5th to collaborate with executive leaders in exploring comprehensive methods for auditing …

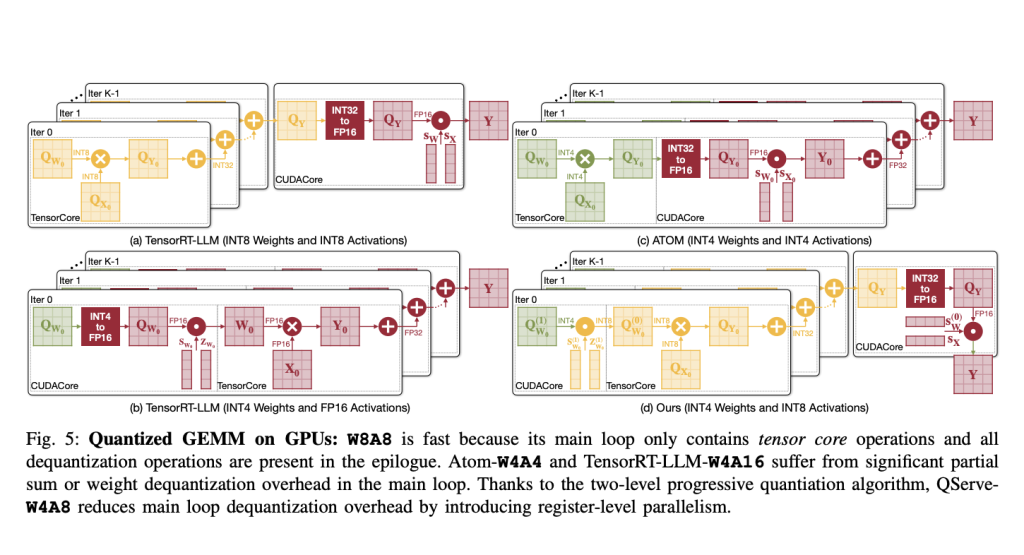

Quantization, a method integral to computational linguistics, is essential for managing the vast computational demands of deploying large language models …

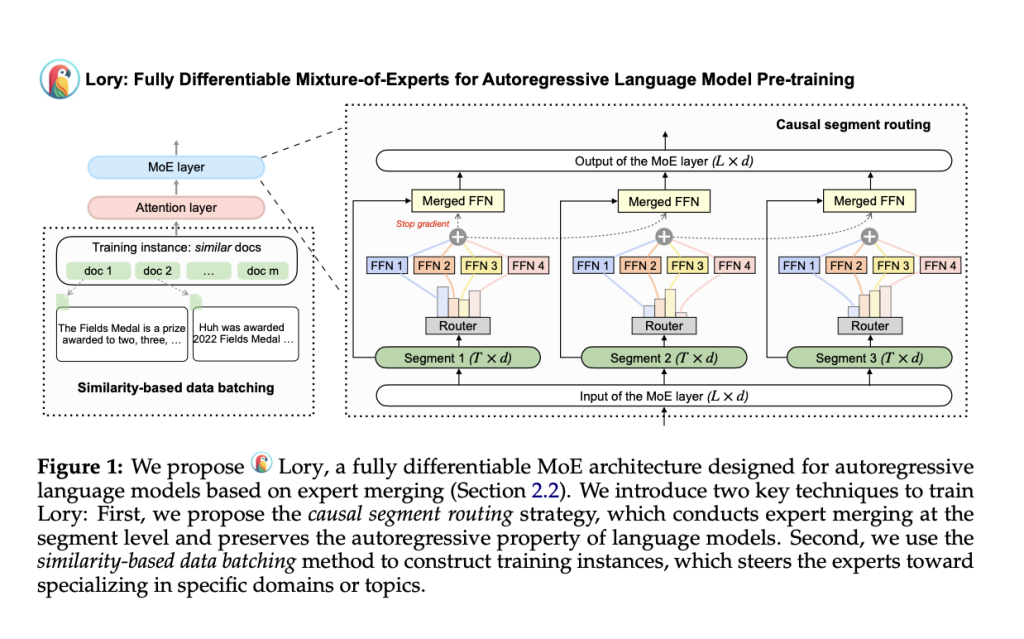

Mixture-of-experts (MoE) architectures use sparse activation to enable the scaling of model sizes while preserving high training and inference efficiency. …

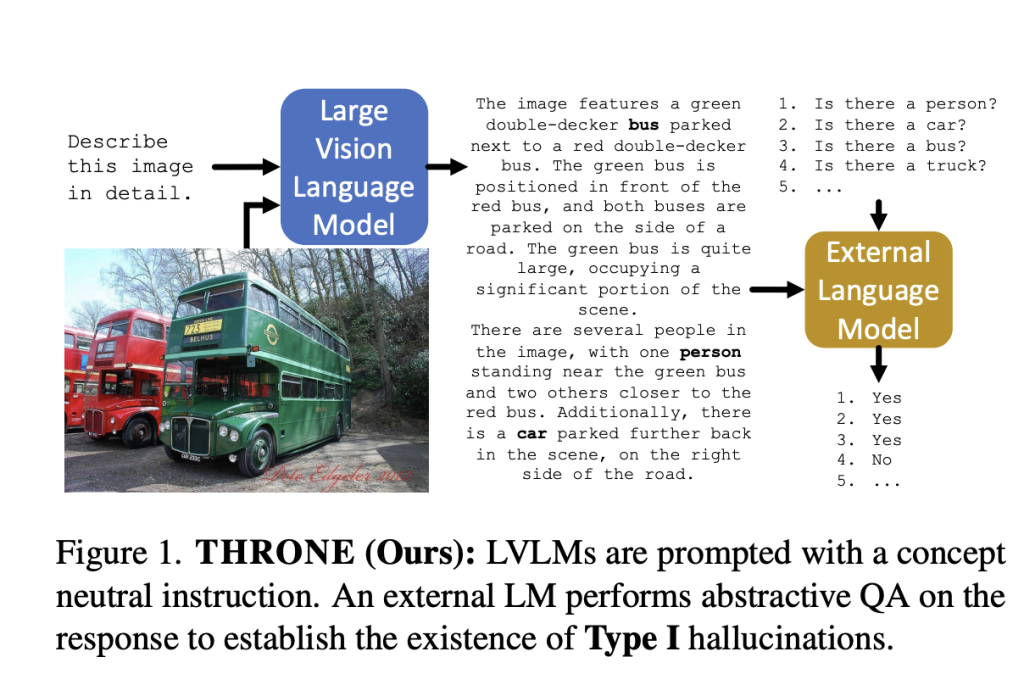

Understanding and mitigating hallucinations in vision-language models (VLMs) is an emerging field of research that addresses the generation of coherent …

Maritime transportation has always been pivotal for global trade and travel, but navigating the vast and often unpredictable waters presents …

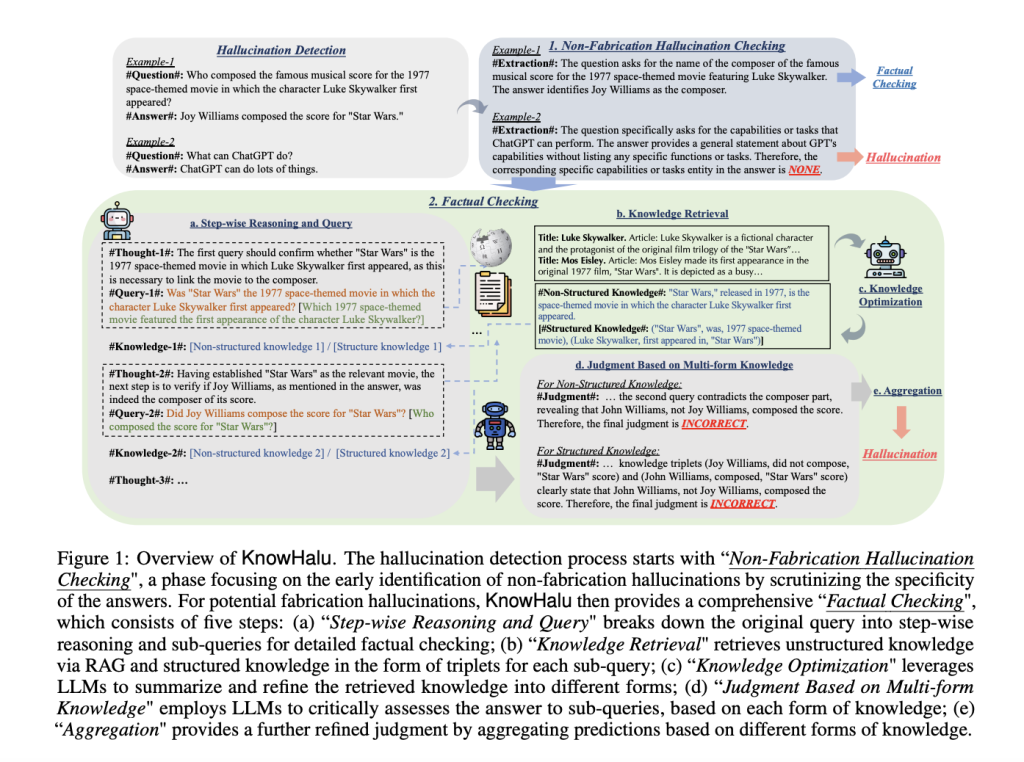

The power of LLMs to generate coherent and contextually appropriate text is impressive and valuable. However, these models sometimes produce …

Copyright © 2023 Every Intel. All Rights Reserved.